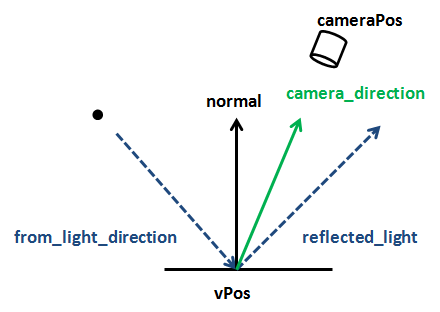

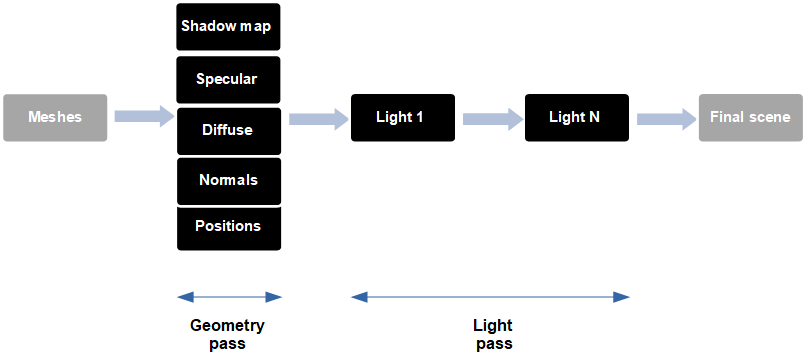

A quick introduction to the programmable graphics pipeline introduced in OpenGL ES 2.0.

This series of tutorials is going to focus on programmable graphics APIs. What does this mean? OpenGL ES 2.0 was the first OpenGL ES version to support a programmable graphics pipeline. OpenGL ES 1.x is a fixed function mobile graphics API.

A fixed function graphics API allows you to specify various bits of data (positions, colours, etc.) for the GPU to work on, and a strict set of commands for using that data. For example, you can tell the GPU that you have a polygon in your scene, specify the reflectance of the material for that polygon, and add a light to the scene. The GPU will then go away and calculate what colour the polygon will appear in your scene.

This is great for simple scenes and allows you to very quickly achieve some very nice looking graphical effects. However, what happens when you want to use a different lighting equation than the one built in to the API? What if you come up with a great new graphics technique but the API doesn't support it?

You're very dependent on the contents of the API: there is a fixed set of functions and those functions have a limited number of options. This is never going to cover all the things you might want to do as a developer. And although the API can be extended to support more options and more techniques, you're then dependent on GPU vendors implementing those extensions. That's where fixed function graphics pipelines fall short.

Instead of creating hundreds of extensions and functions to support all requested use cases, programmable pipelines were created. A programmable pipeline allows the developer to provide custom code that is run on the GPU at various stages of the pipeline. Each piece of code is called a shader, and since shaders can contain arbitrary code the developer is free to do (almost) whatever they like.

Of course, all this freedom comes with a price. Since there are no default shaders in OpenGL ES 2.0 you must always write your own. This means that features like lighting, texturing, and others that are "free" (in terms of developer effort) in OpenGL ES 1.1 must now be programmed explicitly by the developer. It's worth mentioning that OpenGL ES 1.x and OpenGL ES 2.0 onwards cannot be mixed, and OpenGL ES 2.0 is not backwards compatible. In other words, it's all or nothing when choosing fixed function vs. programmable.

Since shaders are C-like programs which run on a GPU, you may be thinking, "how do I compile these shaders?". Because each GPU vendor (and sometimes each GPU) has a different instruction set, we need a way to compile the shaders for every different device. Thankfully this is possible in OpenGL ES using "online" compilation. You provide the source code for your shaders to the OpenGL ES API at runtime, and the OpenGL ES driver uses a compiler on the device itself to compile your code for the instruction set of the device.

More differences between attributes and uniforms are listed in the appendix on attributes vs. uniforms.

There's one last type of data that we could need: per-fragment data that is not interpolated from per-vertex data. For example, what if we want to display an image on a triangle? We can't use uniforms since we don't typically have enough of them to store all the pixels in an image (not to mention that setting and accessing the data would be awful). We also can't use attributes and varyings since those are per-vertex and then interpolated, which doesn't make sense for an image.

Luckily for us OpenGL ES has this covered too. Textures allow us to specify arbitrary arrays of data which can be accessed in the fragment shader. How does each fragment shader instance know where to look in the texture? Good question, but we already have all the knowledge we need to solve this problem. If we specify where in the image each vertex maps to (using an attribute), the rasterizer will interpolate the coordinates nicely for us to give us a per-fragment varying. This coordinate can then be used to look up the correct point in the image.

We've focused on very trivial examples here just to expose the data flows in OpenGL ES. It might not have sold you on why you would want this, or why you should care. Well, this is really only the ground layer. OpenGL ES shaders also allow you to do general purpose maths (including lots of hardware accelerated built-in functions). This fact, combined with the data flows we've already covered, gives you the ability to harness the power of a massively parallel computing unit: the GPU.

Because of the flexibility afforded to you by the OpenGL ES 2.0 API you're free to implement whatever graphical effects you can imagine. Almost all high-end 3D graphics applications (e.g. games) will be using the basic methods described above, using shaders to achieve their effects.
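The texture lookup described in this article (a per-vertex texture coordinate, interpolated by the rasterizer into a per-fragment varying, then used to read the image) can be imitated on the CPU. The following is only a rough Python sketch of that data flow, not OpenGL ES code: the names `TEXTURE`, `VERTEX_UVS`, `sample_nearest`, `interpolate`, `fragment_shader` and `shade_fragment` are all hypothetical, the texture is a made-up 2x2 image, and it assumes plain barycentric blending with nearest-texel sampling (a real GPU also handles perspective correction and filtering).

```python
from typing import List, Sequence, Tuple

Vec2 = Tuple[float, float]
Color = Tuple[int, int, int]

# A tiny 2x2 "texture": rows of RGB texels (made-up illustrative data).
TEXTURE: List[List[Color]] = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (255, 255, 255)],
]

# Per-vertex attribute: where in the image each triangle vertex maps to.
VERTEX_UVS: List[Vec2] = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]


def sample_nearest(texture: List[List[Color]], uv: Vec2) -> Color:
    """Nearest-texel lookup with the coordinate clamped to [0, 1]."""
    height, width = len(texture), len(texture[0])
    u = min(max(uv[0], 0.0), 1.0)
    v = min(max(uv[1], 0.0), 1.0)
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return texture[y][x]


def interpolate(weights: Sequence[float], values: Sequence[Vec2]) -> Vec2:
    """Stand-in for the rasterizer: blend the three per-vertex values
    using the fragment's barycentric weights to produce the varying."""
    u = sum(w * value[0] for w, value in zip(weights, values))
    v = sum(w * value[1] for w, value in zip(weights, values))
    return (u, v)


def fragment_shader(uv: Vec2) -> Color:
    """Stand-in for a fragment shader: use the varying to read the texture."""
    return sample_nearest(TEXTURE, uv)


def shade_fragment(weights: Sequence[float]) -> Color:
    """Colour one fragment, identified by its barycentric weights."""
    uv = interpolate(weights, VERTEX_UVS)
    return fragment_shader(uv)


if __name__ == "__main__":
    # A fragment sitting exactly on the first vertex, and one inside the triangle.
    print(shade_fragment((1.0, 0.0, 0.0)))
    print(shade_fragment((0.2, 0.4, 0.4)))
```

Because `fragment_shader` is just a function, swapping in different logic (a tint, a different lookup) changes the result without touching the interpolation step, which loosely mirrors what a programmable stage offers over a fixed function one.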
